What the Web Didn't Deliver: High Economic Growth

Remember the year 2000, when all appeared to be smooth sailing in the global economy? It was a time of confident predictions of an epochal economic and political renaissance powered by information technology. Jack Welch—then the all-seeing chief executive officer of General Electric—pronounced the Internet “the single most important event in the U.S. economy since the Industrial Revolution.” The Group of Eight highly industrialized nations met in Okinawa in 2000 and declared, “IT is fast becoming a vital engine of growth for the world economy. … Enormous opportunities are there to be seized by us all.” In a 2000 report, the president’s Council of Economic Advisers said, “Many economists now posit that we are entering a new, digital economy that could inaugurate an unprecedented period of sustainable, rapid growth.”

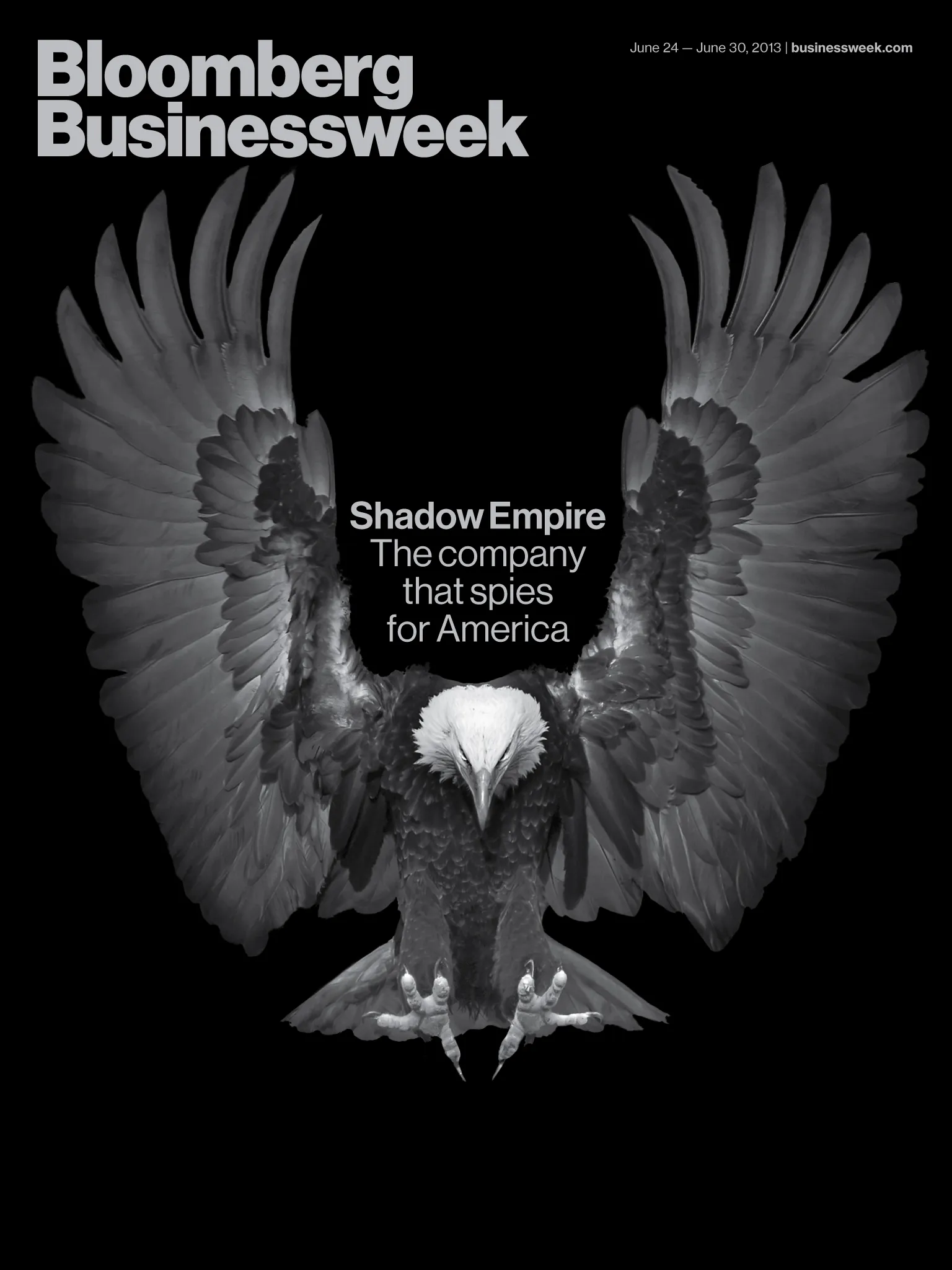

It hasn’t quite worked out that way. The Internet has had a dramatic impact on people’s lives and how they spend their time. It sparks uprisings, makes shopping easier, helps people find their soul mates, and enables governments to collect troves of useful data on potential terrorists—and, apparently, on their own citizens. What the last decade demonstrates, however, is that the information revolution hasn’t generated economic prosperity. It’s tempting to believe an innovation can unlock the secret to high growth. But that way of thinking is almost certainly wrong.